Automatic Generation of SoC Verification Testbench and Tests

Last month, I blogged about a webinar on embedded systems development presented by Agnisys CEO and founder Anupam Bakshi. I liked the way that he linked their various tools into a common flow that spans hardware, software, design, verification, validation, and documentation. Initially I was rather focused on the design aspects of the flow, noting how the Agnisys solution can assemble a complete system-on-chip (SoC) design and generate RTL code for registers, bus interfaces, and a library of IP blocks. But I was also intrigued by the amount of verification automation in the flow, and so I asked Anupam to fill me in on some of the details. He agreed to do so, suggesting that we first look at the big picture and defer the details of which specific tools generate which specific verification models and tests. That made good sense to me.

From the verification viewpoint, the Agnisys flow generates UVM testbench models, UVM-based test sequences that can configure, program, and verify various parts of the design, and C-based tests that can do the same. Of course, the UVM tests run in simulation, but the C tests are more flexible. They can run in simulation on a CPU model, RTL or behavioral, along with the RTL design. They can also run as “bare metal” tests in an emulator, an FPGA prototype, and even the final chip. Thus, the generated tests range from RTL simulation to hardware-software co-simulation to full system validation. In fact, the Agnisys flow even generates formats that can be used to create chip production tests on automatic test equipment (ATE).

Fundamentally, the flow automatically generates three kinds of environments:

- UVM environment for verification

- UVM/C-based SoC verification environment

- Co-verification environment

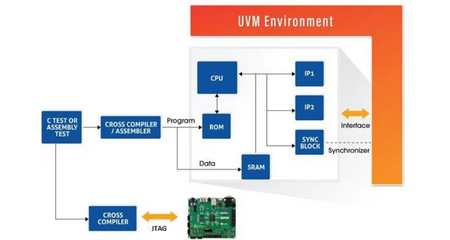

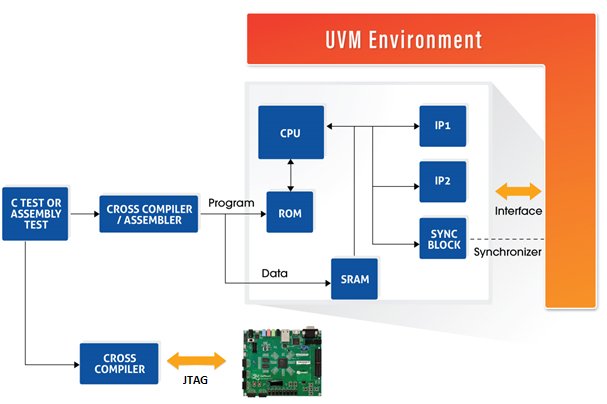

The UVM environment includes generated UVM testbench components for registers, memories, popular bus interfaces such as AXI and AHB, bus bridges and aggregators, and IP blocks such as GPIO, I2C master, timers, and programmable interrupt controllers (PICs). At this stage, the UVM environment might or might not include an embedded processor model. The generated tests use the UVM models and the design’s ports to configure the RTL, stimulate it to perform various functions, and check for correct results and sufficient coverage metrics. Anupam pointed out that these tests verify individual blocks in the chip, and more. Since they access the blocks from the design ports, they also exercise buses, bridges, aggregators, and other types of interconnection logic within the SoC.

The tests automatically generated for this environment are quite sophisticated. The sequences verify all the RTL code generated by tools in the Agnisys design flow, including registers, memories, buses, bridges, aggregators, and IP blocks. These tests can handle interrupts and their corresponding interrupt service routines (ISRs). They also check the functionality of special registers such as lock, page, indirect, shadow, and alias registers. The checks include positive and negative tests for different access types such as read-write, read-only, and write-only, providing 100% coverage in the UVM environment.
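
To make the idea of positive and negative access tests concrete, here is a minimal Python sketch. It is not output from any Agnisys tool; the `Register` class, the `check_access` helper, and the register names are hypothetical, and the model assumes that writes to read-only registers are ignored and that write-only registers read back as zero.

```python
from dataclasses import dataclass


@dataclass
class Register:
    """Toy 32-bit register model (hypothetical, for illustration only)."""
    name: str
    access: str  # "rw", "ro", or "wo"
    value: int = 0

    def write(self, data: int) -> None:
        # Assumption: writes to a read-only register are silently ignored.
        if self.access in ("rw", "wo"):
            self.value = data & 0xFFFFFFFF

    def read(self) -> int:
        # Assumption: write-only registers read back as zero.
        return self.value if self.access in ("rw", "ro") else 0


def check_access(reg: Register, pattern: int = 0xA5A5A5A5) -> bool:
    """Return True if the register obeys its declared access type."""
    before = reg.read()
    reg.write(pattern)
    after = reg.read()
    if reg.access == "rw":
        return after == pattern  # positive test: the write is visible
    if reg.access == "ro":
        return after == before   # negative test: the write has no effect
    if reg.access == "wo":
        # The write lands internally, but the read path hides it.
        return after == 0 and reg.value == pattern
    raise ValueError(f"unknown access type: {reg.access}")


regs = [
    Register("CTRL", "rw"),
    Register("STATUS", "ro", 0x3),
    Register("CMD", "wo"),
]
results = {r.name: check_access(r) for r in regs}
```

A generated UVM or C test would do the equivalent through the design's bus interface rather than on a software model, but the pass/fail logic per access type follows the same pattern.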

In a UVM/C environment, much of the same verification can be performed by running C tests on a model of the embedded processor (or processors). The Agnisys flow generates a UVM/C-based environment that can run both C and UVM tests, including a component that provides synchronization between the two types of tests. There are numerous ways to mix and match these tests, but typically the C code is used to configure the IP blocks while the UVM tests run the bulk of the verification. If the user does not have a processor model available yet, the flow can integrate an RTL SweRV Core EH1, a 32-bit, 2-way superscalar, 9-stage pipeline implementation of the RISC-V architecture.

These generated tests can be used as the start of the SoC regression suite, while the generated UVM environment and models can be the foundation for the complete SoC testbench. The Agnisys approach is flexible; users can specify the sequences needed to configure, program, and verify their own design blocks so that custom tests can also be generated. The flow supports using Python or Excel to develop the sequence/test specifications. Anupam noted that the C tests are often used as the base for device drivers, diagnostics, and other forms of production embedded software. The idea that a single specification can be used to generate tests for UVM, UVM/C, and bare metal is clearly powerful.
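
The single-specification idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `SEQUENCE` step format, the `OFFSETS` table, and the `render_c` and `render_uvm` helpers are all hypothetical and do not represent the Agnisys input format or its generated code. The point is simply that one abstract list of steps can be rendered into both C and UVM-style text.

```python
# Hypothetical, tool-agnostic sequence: (operation, register name, value).
SEQUENCE = [
    ("write", "CTRL", 0x1),
    ("expect", "STATUS", 0x1),
]

# Assumed register offsets, for illustration only.
OFFSETS = {"CTRL": 0x00, "STATUS": 0x04}


def render_c(steps, base="BLOCK_BASE"):
    """Emit bare-metal style C statements for the sequence."""
    lines = []
    for op, reg, val in steps:
        addr = f"({base} + 0x{OFFSETS[reg]:02X})"
        if op == "write":
            lines.append(f"*(volatile uint32_t *){addr} = 0x{val:X}u;")
        elif op == "expect":
            lines.append(
                f"if (*(volatile uint32_t *){addr} != 0x{val:X}u) return 1;"
            )
        else:
            raise ValueError(f"unknown operation: {op}")
    return lines


def render_uvm(steps, model="rm"):
    """Emit UVM register-layer style statements for the same sequence."""
    lines = []
    for op, reg, val in steps:
        if op == "write":
            lines.append(f"{model}.{reg}.write(status, 32'h{val:X});")
        elif op == "expect":
            lines.append(f"{model}.{reg}.read(status, data);")
            lines.append(
                f"if (data != 32'h{val:X}) "
                f"`uvm_error(\"SEQ\", \"{reg} mismatch\")"
            )
        else:
            raise ValueError(f"unknown operation: {op}")
    return lines


c_code = render_c(SEQUENCE)
uvm_code = render_uvm(SEQUENCE)
```

Printing `c_code` and `uvm_code` side by side shows the same two steps expressed for a bare-metal target and for a UVM register model, which is the property that lets one specification drive simulation, co-simulation, and hardware validation.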

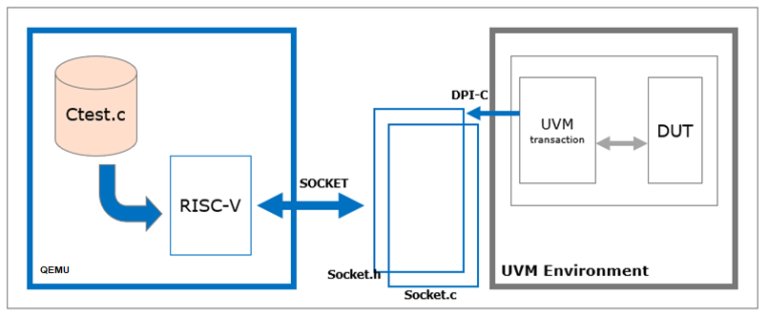

The co-verification environment mixes C and UVM tests in a different way. The C tests run on the QEMU open-source emulator and virtualizer. QEMU emulates the processor behavior and is an especially good vehicle for developing and debugging device drivers for the IP blocks. This approach helps teams develop their tests and driver code without the need for FPGA-based prototypes, so it's a much more cost-effective and scalable alternative.

Since ultimately users buy tools to enable this SoC verification flow, I asked Anupam to quickly summarize how their products contribute:

- IDesignSpec™ GDI (IDS) generates UVM models for registers and memories.

- IDS-Verify™ generates a complete UVM testbench with test sequences and coverage.

- Standard Library of IP Generators (IDS-IPGen™) generates tests for its IP blocks.

- Automatic SOC Verification and Validation (IDS-Validate™) generates device driver building blocks and supports bare-metal verification.

- IDS-Integrate™ assembles the complete SoC verified in the flow.

Press Source – https://semiwiki.com/eda/294166-automatic-generation-of-soc-verification-testbench-and-tests/
