Semiconductor Debug: The Schedule Killer

Debug often has been labeled the curse of management and schedules. It is considered unpredictable and often can happen close to the end of the development cycle, or even after – leading to frantic attempts at work-arounds. And the problem is growing.

“Historically, about 40% of time is spent in debug, and that aspect is becoming more complex,” says Vijay Chobisa, director of product management at Siemens EDA. “Today, people are spending even more time doing debug. Debug has a lot of challenges. It has become the task that can increase your tape-out times, or even risk your tape-out.”

That concerns more than engineers. “Debug is at the top of our customers’ minds all the time,” says Swami Venkat, senior director of strategic programs in the verification group of Synopsys. “It used to be that only engineers cared about debug, but now executives are worried about it because it is impacting the project schedule.”

But to concentrate on debug may be the wrong focus. “Debug itself is a useful task, but if you’re debugging excessively, and if you don’t have the facilities to do efficient debug, then debug itself can be a schedule killer,” says Stelios Diamantidis, director of AI products and research at Synopsys. “Essentially the causes that are making you need excessive debug may be the schedule killer.”

So perhaps the real question is how to reduce or eliminate time spent in debug. “Debug has become the dominant task in verification, which is the dominant part of chip development,” says Anupam Bakshi, CEO of Agnisys. “But debug is only necessary because the design and test-bench contain bugs, so the best way to lessen the impact of debug is to reduce the number of bugs.”

Finding the bug

Debug starts once a problem is found. “The challenge is stimulating the bugs,” says Simon Davidmann, founder and CEO of Imperas Software. “Verification is probably the curse of management because you have to throw as many resources at it as you can afford. You keep doing that until the last possible moment, even when you’re signing off the RTL, even when silicon comes back. It’s a cost function. Bugs can be simple logic functionality bugs through to performance issues.”

Debug challenges constantly evolve, too. “Customers now do the majority of their verification either at the SoC level or when the SoC is put in the system,” says Siemens’ Chobisa. “Block level and IP verification continue to be important, but there are tools and technologies in place where people are comfortable doing validation and debug at that level. This trend of doing validation at the SoC level, when you put things in a system, where you bring the software together with the hardware and verify them together for the purpose of optimizing the entire system, presents new challenges.”

SoC-level verification often involves hardware engines. “Hardware-in-the-loop capabilities continue to improve, and this has helped address the cycle time challenges that lead to long debug cycles,” says Chris Stinson, senior director for test, measurement, and emulation markets at Xilinx. “Additionally, the ability to capture data in real time for analysis, and replay later, also has improved getting to root cause more quickly. Improved memory bandwidth, both on-chip and externally, has played a key role in this advanced capability.”

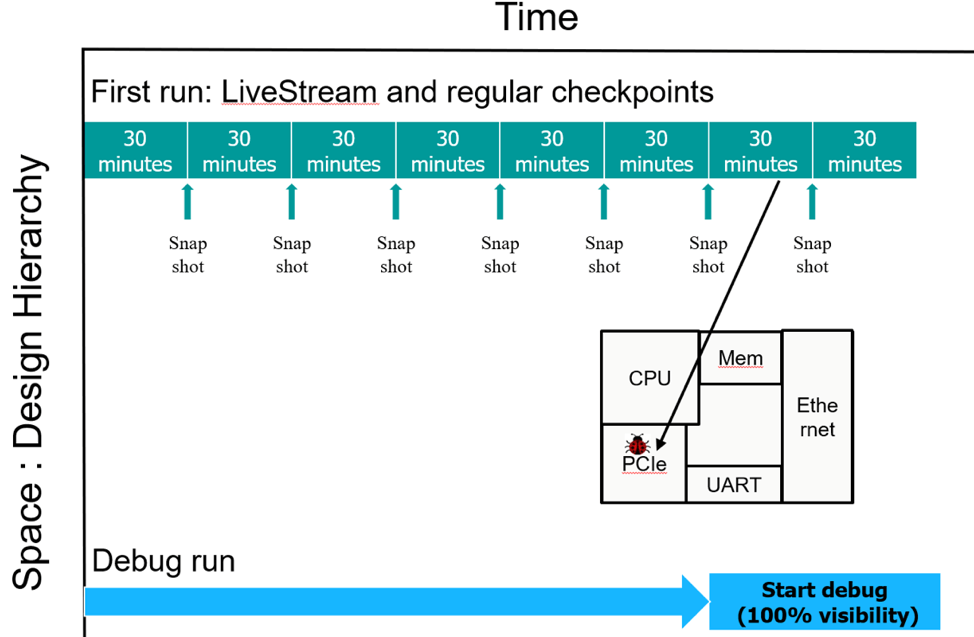

It takes a lot of infrastructure around the emulator or prototyping solution to optimize debug. “Customers want to maximize the efficiency of using hardware resources to get to the point where they can debug a waveform,” says Johannes Stahl, senior director of product marketing at Synopsys. “If I run through billions and billions of vectors before finding an issue, you don’t want to go back to the very beginning of the emulation and run it all again. You need to have a system-level debug methodology, where you are saving the state of the emulation, saving the state of the inputs, all the time. Then, if you have to go back to a prior moment in time, you can do that very quickly.”

Figure 1 shows the solution deployed by emulator vendors.

Fig. 1: Using checkpoint/restore to focus on the debug challenge. Source: Siemens EDA
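The checkpoint/restore idea can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: `ToyDesign` is a hypothetical stand-in for an emulated design, and the snapshot interval and stimulus are invented. It is not how any emulator vendor implements the feature.

```python
import copy


class ToyDesign:
    """Hypothetical stand-in for an emulated design: a deterministic state machine."""

    def __init__(self):
        self.cycle = 0
        self.acc = 0

    def step(self, stimulus):
        # Next state depends only on the current state and the input.
        self.acc = (self.acc * 31 + stimulus) % 65521
        self.cycle += 1


def run_with_checkpoints(stimuli, interval):
    """Run the design, saving a snapshot of its state every `interval` cycles."""
    design = ToyDesign()
    checkpoints = {0: copy.deepcopy(design)}
    for s in stimuli:
        design.step(s)
        if design.cycle % interval == 0:
            checkpoints[design.cycle] = copy.deepcopy(design)
    return design, checkpoints


def restore_and_rerun(checkpoints, stimuli, target_cycle):
    """Restore the latest snapshot at or before target_cycle, then re-run only the gap."""
    start = max(c for c in checkpoints if c <= target_cycle)
    design = copy.deepcopy(checkpoints[start])
    for s in stimuli[start:target_cycle]:
        design.step(s)
    return design, target_cycle - start


# Invented stimulus: 10,000 cycles, snapshot every 1,000 cycles.
stimuli = [(i * 7) % 13 for i in range(10_000)]
final, checkpoints = run_with_checkpoints(stimuli, interval=1_000)

# Suppose the failure is observed around cycle 9,750.
replayed, cycles_rerun = restore_and_rerun(checkpoints, stimuli, target_cycle=9_750)
print(f"re-ran {cycles_rerun} cycles instead of 9750")
```

The trade-off in a sketch like this is storage against re-run time: a shorter interval means more snapshots to keep but fewer cycles to repeat before reaching the window of interest.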

Another problem with hardware execution is repeatability. “At the block level, test benches are deterministic,” says Chobisa. “There are additional challenges when you have physical hardware connected to make the system deterministic. When you’re running a benchmark or software application and you see a problem, and if you run the test again and the problem disappeared, that is not acceptable. When you run the first time, we create a database where we are capturing all the activity happening at the boundary, which is clock cycle-based. After the first run, the physical interfaces are no longer connected. The stimulus is coming from this replay database. And now you will be able to reproduce the cycle-by-cycle behavior. You can also send this replay database to other teams because you don’t need the physical setup.”
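The record-and-replay approach Chobisa describes can be sketched in a few lines of Python. The names `dut_step`, `live_run`, and `replay_run` are hypothetical, and the random source merely stands in for a physical interface. The point is only that once boundary activity is captured per cycle, a second run no longer needs the nondeterministic source.

```python
import random


def dut_step(state, boundary_input):
    """Hypothetical deterministic design: next state depends only on state and input."""
    return (state * 17 + boundary_input) % 9973


def live_run(cycles, rng):
    """First run: inputs arrive from a nondeterministic source and are recorded per cycle."""
    replay_db = []
    trace = []
    state = 1
    for cycle in range(cycles):
        boundary_input = rng.randrange(256)  # stands in for a physical interface
        replay_db.append((cycle, boundary_input))
        state = dut_step(state, boundary_input)
        trace.append(state)
    return replay_db, trace


def replay_run(replay_db):
    """Later runs: the physical source is disconnected; stimulus comes from the database."""
    trace = []
    state = 1
    for _cycle, boundary_input in replay_db:
        state = dut_step(state, boundary_input)
        trace.append(state)
    return trace


replay_db, first_trace = live_run(1_000, random.SystemRandom())
replayed_trace = replay_run(replay_db)
print("cycle-by-cycle match:", replayed_trace == first_trace)
```

Because `replay_db` is plain data, it could be handed to another team and replayed without the original setup, which mirrors the sharing benefit mentioned in the quote.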

These problems can exist on a smaller scale, as well. “In verification, a lot of things are synchronous, a lot of things are order-predictable,” says Imperas’ Davidmann. “And then a few things are more complex. One of the biggest challenges that we’ve seen in RISC-V processor verification has to do with asynchronous events — things that happen in unpredictable time, and maybe in alternate ways.”

A problem with debug that includes software is that runs can be very long. “Where possible, we want short tests,” says Shubhodeep Roy Choudhury, CEO of Valtrix Systems. “This is especially important early on in the verification and debug process, but it needs to scale to longer tests that may include the integration of peripheral drivers in the later stages of design.”

Bugs in the test-bench

Every bug that escapes into a product, or beyond a defined checkpoint in the development flow, is by definition a bug in the test-bench. Verification failed to show the existence of that bug, and many companies put policies in place to analyze those bug escapes and determine how they should have been detected, so that similar bugs do not escape in the future.

But just like the design, the test-bench itself can contain bugs. Reference models can contain bugs, and so can coverage. Very little tracking has been done on these test-bench bugs, and so every verification engineer is left to their own experience.

There are many places where bugs can hide. “It could be a design problem, it could be they didn’t specify that this is something that needs checking, it could be they did specify it but actually the test-bench had a bug, or the coverage reported something which was wrong,” says Davidmann. “Is it a bug in the test bench or a missing capability? If a bug escapes it means you haven’t done a good enough job finding the bugs, which has to do with the stimulus or the test-bench or the measurement of it. It’s the fault of the verification solution.”

When changes are checked in and regressions are run, the first step is bug triage. “If a customer runs a regression, and 500 of them logged a failure, you really don’t know whether there is a design bug or there is a test bench bug,” says Synopsys’ Venkat. “We take the failed cases and use AI/ML to read the signature of the bugs, and bin them. Of that smaller number of bins, we now apply additional AI/ML technologies to root cause and figure out whether this is a DUT-related bug or a test-bench issue. If it is a test-bench-related bug, then we give them more clues and more pointers to where exactly the bug in the test-bench might be. And there can be a lot of different areas where the bug in the test-bench might be. For example, you might have bugs in a UVM class object that might be a dynamic object, and finding bugs in those dynamic objects might be difficult.”
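The binning step can be illustrated without any machine learning. The Python sketch below swaps in plain regular-expression normalization for the AI/ML signature reading that Venkat describes, so it is a much simpler technique and not the vendor's method. The log messages and test names are invented for illustration.

```python
import re
from collections import defaultdict


def signature(message):
    """Reduce a failure message to a signature by masking run-specific values."""
    sig = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", message)  # mask hex data values first
    sig = re.sub(r"\d+", "<N>", sig)                    # then timestamps and counts
    return sig.strip()


def bin_failures(failures):
    """Group failing tests whose messages share the same signature."""
    bins = defaultdict(list)
    for test_name, message in failures:
        bins[signature(message)].append(test_name)
    return dict(bins)


# Invented regression results: (test name, first error message).
failures = [
    ("test_dma_017", "UVM_ERROR @ 1200ns: scoreboard mismatch exp=0x1f got=0x2a"),
    ("test_dma_101", "UVM_ERROR @ 98450ns: scoreboard mismatch exp=0x00 got=0xff"),
    ("test_irq_003", "UVM_FATAL @ 300ns: null object handle in sequence item 4"),
    ("test_irq_044", "UVM_FATAL @ 7750ns: null object handle in sequence item 12"),
    ("test_pwr_009", "ASSERT FAILED @ 5100ns: req not acked within 16 cycles"),
]

bins = bin_failures(failures)
for sig, tests in bins.items():
    print(f"{len(tests)} failure(s): {sig}")
```

Even this crude grouping turns five failures into three bins to investigate. Deciding whether each bin points at the DUT or the test-bench is the harder root-cause step, and it is not attempted here.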

Avoiding bugs

By far the best way to tame debug is to avoid it. “You’ve got to make sure you debug as little as possible,” says Synopsys’ Diamantidis. “In fact, if you can debug zero, you are being highly successful. The reality is that it’s not generally possible. I would start the thinking process with what is the optimization, and how are you tracking the initial action of getting from A to B, so that you can get as close to B as possible and you have to rely on debug last. When you do rely on debug, have all the information you need to successfully address the problem and then complete your journey.”

Others agree. “If debug is a cancer, then preventive care is the alternative,” says Shekhar Kapoor, director of marketing at Synopsys. “How can you eliminate all the debug and make sure you can practically implement techniques in your design, have more predictability in your design, and adjust from that angle? Data is the lifeblood of debugging, and if you can identify some patterns and opportunities that provide better insights and bring those into your design earlier, then you can prevent these problems. There is an opportunity for new solutions in this area. A lot of scripting is done today, but there could be more intelligent assistance that could be helpful.”

For some aspects of the design, bugs can be effectively eliminated. “One of the main sources of bugs is different teams interpreting the chip specification differently or getting out of sync on spec versions,” says Agnisys’ Bakshi. “Specification-driven IP and chip development can eliminate bugs and keep the teams in sync. For some parts of the chip, hardware RTL design, test-bench components, verification sequences, and user documentation, can be automatically generated from the specification. Specification-driven development is a correct-by-construction methodology that prevents many bugs, and thereby reduces debug time.”
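As a rough illustration of the specification-driven idea, the Python sketch below derives two artifacts, a C header for software and a documentation table, from one machine-readable register description. The spec format, the register names, and the `DEMO` prefix are all hypothetical. Commercial tools use their own specification formats and generate far more, including RTL and test-bench components.

```python
# Hypothetical register specification: each field is (name, lsb, width).
REGISTER_SPEC = [
    {"name": "CTRL", "offset": 0x00, "fields": [("ENABLE", 0, 1), ("MODE", 1, 2)]},
    {"name": "STATUS", "offset": 0x04, "fields": [("BUSY", 0, 1), ("ERR_CODE", 4, 4)]},
]


def gen_c_header(spec, prefix="DEMO"):
    """Generate C #defines for register offsets and field masks."""
    lines = []
    for reg in spec:
        lines.append(f"#define {prefix}_{reg['name']}_OFFSET 0x{reg['offset']:02X}")
        for fname, lsb, width in reg["fields"]:
            mask = ((1 << width) - 1) << lsb
            lines.append(f"#define {prefix}_{reg['name']}_{fname}_MASK 0x{mask:08X}")
    return "\n".join(lines)


def gen_doc_table(spec):
    """Generate a plain-text documentation table from the same spec."""
    rows = ["Register | Offset | Field | Bits"]
    for reg in spec:
        for fname, lsb, width in reg["fields"]:
            msb = lsb + width - 1
            rows.append(f"{reg['name']} | 0x{reg['offset']:02X} | {fname} | [{msb}:{lsb}]")
    return "\n".join(rows)


header = gen_c_header(REGISTER_SPEC)
doc = gen_doc_table(REGISTER_SPEC)
print(header)
print(doc)
```

Because both outputs come from the same source, changing a field position in `REGISTER_SPEC` updates the software view and the documentation together. That is the mechanism by which this style of flow keeps teams from drifting onto different spec versions.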

In other areas, such as clock domain or reset domain crossing, dedicated solutions have been created. “Customers have adopted technologies like static verification, where you can find a class of bugs more easily,” says Venkat. “Linting has always been there and has gotten more intelligent. It has become more capable with AI and ML influence. Clock domain or reset crossing problems can now be found earlier, instead of having to run a lot of vectors and trying to debug through a gazillion cycles.”
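To show why a static check can find this class of bug without running vectors, here is a deliberately simplified Python sketch of a structural clock domain crossing check. The netlist, the signal names, and the rule itself are assumptions: a crossing is accepted only if the receiving flop has a single driver and feeds exactly one more flop in its own domain, approximating a two-flop synchronizer. Production CDC tools apply far richer rules.

```python
# Hypothetical netlist: flop name -> clock domain.
FLOPS = {
    "tx_valid": "clk_a", "tx_data": "clk_a",
    "sync1": "clk_b", "sync2": "clk_b",
    "rx_valid": "clk_b", "rx_data": "clk_b",
}

# Flop-to-flop connections, with combinational logic abstracted away.
FANOUT = {
    "tx_valid": ["sync1"],
    "sync1": ["sync2"],
    "sync2": ["rx_valid"],
    "tx_data": ["rx_data"],  # crosses domains with no synchronizer
}


def unsynchronized_crossings(flops, fanout):
    """Return (source, destination) pairs that cross domains without a 2-flop synchronizer."""
    drivers = {}
    for src, sinks in fanout.items():
        for dst in sinks:
            drivers.setdefault(dst, []).append(src)

    violations = []
    for src, sinks in fanout.items():
        for dst in sinks:
            if flops[src] == flops[dst]:
                continue  # same clock domain, not a crossing
            next_stage = fanout.get(dst, [])
            is_synchronizer = (
                drivers[dst] == [src]
                and len(next_stage) == 1
                and flops[next_stage[0]] == flops[dst]
            )
            if not is_synchronizer:
                violations.append((src, dst))
    return violations


violations = unsynchronized_crossings(FLOPS, FANOUT)
print(violations)
```

The check inspects structure only, so it reports the `tx_data` crossing immediately, whereas a simulation might need many cycles before a metastability-related failure shows up, if it shows up at all.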

Finding bugs close to the point of insertion can help. “Debug time in chip development can be reduced by finding and fixing bugs earlier in the process, ideally as design and verification engineers type in their code,” says Cristian Amitroaie, CEO for AMIQ EDA. “An integrated development environment (IDE) can detect a wide range of errors ‘on the fly’ and reduces debug time for these errors to zero since they can be fixed immediately by the user. In many cases, the IDE also eliminates the effort of resolving the errors by suggesting possible fixes and auto-completion options.”

It all starts with planning. “Verification planning is an essential element to more predictability,” says Rob van Blommestein, head of marketing at OneSpin Solutions. “As part of the plan, verification should be done earlier in the design process to maximize feedback and streamline the debug process. Feedback and understanding of coverage efforts can be further streamlined through tools that provide a single interface for viewing and analyzing data from multiple verification sources, like simulation and formal.”

Including software

An increasing amount of functionality today is defined by software, and while hardware theoretically needs to be able to run any arbitrary software, bringing production software into the picture can help focus verification on the more important areas of system functionality.

“The ability to perform a system-level simulation is critical in identifying challenging software and hardware interaction issues,” says Xilinx’s Stinson. “As design complexity continues to increase, the ability to simulate the number of cycles needed becomes more challenging. This is where FPGAs play an increasingly important role in system-level verification. The ability to model systems in hardware allows design teams to perform more debug cycles per day, as well as dynamically probe different signals in the design as needed. Additionally, with the ability for software and hardware models to interact with each other, designers can reduce their initial system bring up and validation time.”

But emulation and prototyping do rely on RTL being available. “We provided simulation, or a virtual platform, so the software guys had a way of testing the software well before silicon, well before RTL was available,” says Davidmann. “That makes it a lot easier to bring the software up on hardware prototypes, FPGAs, or things cobbled together from a previous generation of the design.”

Synopsys’ Stahl agrees with that approach. “If you look at system integration, close to tapeout the focus is to run software on the chip. The best way to avoid an error in the test-bench, which at that point in time is mostly software, is to debug the software up front. For some customers, the answer is to develop their software in a virtual prototype. Then they can get it mostly right before attempting to put it on an emulator with all the RTL around it.”

Still, that does not make hardware/software debug easy. “The software debug task is a task for a software developer, and hardware debug is a task for the hardware verification engineer, and these are not converging,” adds Stahl. “They each require specialized knowledge. You need to understand the hardware, or you need to understand the software stack. Those two groups will never understand the fullness of their respective worlds. When they come together, when you want to find a performance bug, there’s no other way than looking at joint data. But that data should be at a higher level than a Verilog line item, or a software line item. It needs to be at a higher abstraction, maybe at the protocol level, or one of the transactions in the protocol. Those are things where a software person and a hardware person can look together and figure out what’s going on.”

Data abstraction has become a very necessary part of debug. “We have protocol analyzers for standard protocols that collect information and show you the transaction level,” says Chobisa. “You can view it at the PHY level, the transaction level, or the application level.”
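The step from cycle-level data to transaction-level data can be sketched in Python. The handshake used here (a beat transfers when `valid` and `ready` are both high, and `last` closes the transaction) is an assumed generic streaming protocol, and the sample data is invented. It is not taken from any specific protocol analyzer.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    start_cycle: int
    end_cycle: int
    beats: list


def to_transactions(samples):
    """Group cycle-level handshake samples into transaction-level records."""
    transactions = []
    beats, start = [], None
    for s in samples:
        if not (s["valid"] and s["ready"]):
            continue  # idle cycle or back-pressure: nothing transferred
        if start is None:
            start = s["cycle"]
        beats.append(s["data"])
        if s["last"]:
            transactions.append(Transaction(start, s["cycle"], beats))
            beats, start = [], None
    return transactions


# Invented waveform samples, one entry per clock cycle.
samples = [
    {"cycle": 0, "valid": 0, "ready": 1, "data": 0x0, "last": 0},
    {"cycle": 1, "valid": 1, "ready": 1, "data": 0xA, "last": 0},
    {"cycle": 2, "valid": 1, "ready": 0, "data": 0xB, "last": 1},  # stalled
    {"cycle": 3, "valid": 1, "ready": 1, "data": 0xB, "last": 1},
    {"cycle": 4, "valid": 0, "ready": 1, "data": 0x0, "last": 0},
    {"cycle": 5, "valid": 1, "ready": 1, "data": 0xC, "last": 1},
]

transactions = to_transactions(samples)
for t in transactions:
    print(f"cycles {t.start_cycle}-{t.end_cycle}: {[hex(b) for b in t.beats]}")
```

Six cycles of signal values collapse into two records, which is the kind of view a hardware engineer and a software engineer can reasonably read together.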

New debug issues

By moving to the system level, new debug challenges are emerging beyond basic functionality. Performance already has been mentioned, and power is quickly becoming an area that may require debug attention.

“Emulators have evolved to become the heart of power/performance analysis,” says Chobisa. “You have to start very early when your RTL is barely ready. You want to run the software application, or workload, or benchmark, and see the power profile. You are not worrying about milliwatts or microwatts, but you want to see the power profile when running boot, when executing firmware. What does my power look like when I’m running Angry Birds or Manhattan applications? By looking at this power profile early on in your design verification cycle, you can do something about it.”

New areas are emerging, such as vulnerability to intrusions. “One company modeled their embedded system, then attacked it,” says Davidmann. “They can watch how the software responds. They are effectively doing mutation testing by making things wrong and seeing if the software can realize something’s going on and prevent it.”

Some challenges arise because of new architectures. “If you think about the last 30 years of semiconductor engineering, we’ve had a fairly fixed set of software that came from applications built for compiler/driver stacks, that map to very specific architectures, dominated by x86 in the data center and RISC in mobile devices,” says Diamantidis. “Those went from silicon generation to silicon generation as we leveraged Moore’s law, to make them faster, make them smaller, make them more energy-efficient. That put a lot of nice control points in the design process. In the last three years with the advent of AI, silicon is becoming very domain specific and personalized. As that happens, new requirements are popping up for functionality, architecture, and geometry. You now have to concurrently satisfy conditions in different stages. It’s leading to opportunities to create solutions that are more global, versus stage focused.”

Impact from AI/ML

While AI/ML may be presenting new debug challenges, it is also on the cusp of providing some significant productivity gains. “Machine learning will show us where we were making mistakes and where we could improve,” says Davidmann. “Verification is all about doing things better, or having fewer bugs. When we find a bug, it should be able to infer why the hole exists. It may not tell you how to fix it. It’s more likely to say, ‘This is where your weaknesses are, and that is where you need to focus.’”

A lot of it is still in the early stages. “There are many different areas where AI and ML technologies can be applied, and we’re seeing very encouraging results from some of the engagements that are already happening with early adopters for that technology,” says Venkat. “Often, what would take a week of manual effort can now be done in a few hours. There has been significant innovation in AI and ML. If debug is the curse, this may well be the cure.”

Others see ML as an important tool, as well. “Machine Learning has its place in these new verification environments, automatically classifying data that allows engineers to make fast and accurate decisions, shortening or even eliminating the debug cycle,” says Hagai Arbel, CEO for Vtool. “An approach that combines machine learning with advanced visualization, and an all-inclusive debug methodology, has been proven to reduce the debug cycle dramatically. The key to enabling next-generation debug is the combination of these technologies in an effective and cohesive manner.”

Debug solutions may look different than in the past. “Each designer is holding information a lot closer to the vest,” says Diamantidis. “It’s difficult to create global models that would fit an arbitrary design problem. That is both a curse, meaning it’s frustrating for developers who want to design comprehensive solutions, but there’s also a silver lining to it. If you are able to provide targeted solutions for certain design styles, you typically will not need as much data and your model would not be as complex. We are used to trying to build universal solutions that are one size fits all.”

Conclusion

Debug is not going away, but it is continually evolving. “This is only getting worse,” says Stahl. “We are working very hard to find new solutions to approach the problem. Pushing our engines and debugging tools in the traditional way will not get us there. We need to do more.”
