Functional Safety and Security in Embedded Systems

Overview

Electronics in general, and embedded systems in particular, become more critical every day. Hardly any aspect of our lives is not controlled, monitored, or connected by embedded systems. Even adventurers exploring the most remote regions of our planet carry satellite phones for emergency contact. This ever-increasing role places huge demands on the functional safety and security of the chips and systems we design. I’d like to explore these two topics a bit and recommend that you view a webinar that we recorded earlier this year for a deeper dive.

Let me start by differentiating the two terms, since “safety” and “security” tend to be used almost interchangeably in everyday speech. Functional safety has a specific meaning when applied to electronics and embedded systems: it is a measure of how correctly the system behaves in response to a range of failures. One commonly cited example is an alpha particle flipping a memory bit. If this occurs in safety-critical logic, the design must include a mechanism to detect the failure and, if possible, correct it. Other sources of failure include human error, environmental stress, broken connections, and aging effects.

Embedded systems security is quite different. At a high level, security means that no private information can leak out and that no malicious agent can get in to control the system. Proper security is critical for system authentication, confidentiality, integrity, non-repudiation, and access control. While security is often regarded as a software-centric topic, the importance of security in hardware became strikingly evident with the 2018 disclosure of the Meltdown and Spectre vulnerabilities in numerous common microprocessors.

Functional safety and security are important in many applications. Autonomous vehicles are a good example; no one wants a bit-flip to cause a car to steer into opposing traffic, or for an evil hacker to take control and deliberately cause a crash. Nuclear power plants, military systems, and implanted medical devices are a few other applications where both functional safety and security are life-or-death requirements. Satellites and other space-borne applications have elevated safety risks due to cosmic rays. Security is a major concern for Internet-of-Things (IoT) devices since these tend to be installed by individuals with no training in proper configuration. I could cite many such examples.

Standards of Functional Safety

In addition to meeting the needs of their target markets, many applications must also satisfy professional standards. Security is a relatively new area for standardization, so there’s not much in place yet. However, there are several widely adopted standards for functional safety, including:

- ISO 26262 – Automotive

- DO-254 – Avionics

- IEC 61508 – Industrial

- MIL-STD-882 – Military

- IEC 61513 – Nuclear

- IEC 60601 – Medical

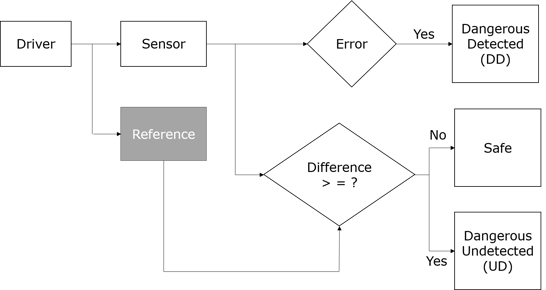

So, what does a hardware designer do to build embedded systems that are both secure and functionally safe? The answer is simple: use well-established techniques to guard against problems, detect them if they do occur, and take appropriate corrective action. Of course, the implementation of these techniques may be a lot harder than this simple answer. In the context of functional safety, the system should detect a fault in critical logic. If the fault remains undetected and does not lead to any deviation from the expected (reference) behavior, the system is considered safe. However, an undetected fault that causes a deviation is a serious concern since it could cause improper operation or even system failure.

Figure 1: Flowchart for Safe Design
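The reasoning about detected and undetected faults can be read as a three-way classification. Here is a minimal Python sketch of that reading; the names `FaultOutcome` and `classify_fault` are my own illustrative choices, not terms from any safety standard, and a real flow would compare full simulation traces rather than a single output value.

```python
from enum import Enum


class FaultOutcome(Enum):
    """Hypothetical labels for the three cases described in the text."""
    DETECTED = "detected, so corrective action can be taken"
    SAFE_UNDETECTED = "undetected, but no deviation from reference behavior"
    DANGEROUS_UNDETECTED = "undetected and causes a deviation"


def classify_fault(detected: bool, output: int, reference_output: int) -> FaultOutcome:
    """Classify one injected fault by comparing faulty and reference outputs."""
    if detected:
        return FaultOutcome.DETECTED
    if output == reference_output:
        # Nobody noticed the fault, but behavior is unchanged.
        return FaultOutcome.SAFE_UNDETECTED
    # Nobody noticed the fault and behavior changed: the case to eliminate.
    return FaultOutcome.DANGEROUS_UNDETECTED
```

In this sketch, only the last case counts against the design, which is why the detection techniques discussed in this article aim to shrink it as far as possible.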

Over the years, designers have developed several techniques for detecting and sometimes correcting faults that might compromise functional safety. These include:

- Adding a parity bit to detect a changed value

- Calculating and checking a Cyclic Redundancy Check (CRC) to detect a changed value

- Using an error-correcting code such as Single Error Correction, Double Error Detection (SECDED) to correct a single-bit change and detect a double-bit change

- Implementing Triple Modular Redundancy (TMR) so that two correct values will “outvote” an incorrect value
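To make the four techniques in the list above more concrete, here is a small software model of each in Python. This is only a sketch under my own assumptions: real designs implement these in hardware, the CRC polynomial (0x07) and the 4-data-bit extended Hamming layout are choices I made for illustration, and all function names are hypothetical.

```python
def even_parity_bit(value: int) -> int:
    """Parity bit that makes the total number of 1 bits even."""
    return bin(value).count("1") & 1


def crc8(data: bytes, poly: int = 0x07) -> int:
    """Bitwise CRC-8, MSB first, initial value 0 (polynomial is an assumption)."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def tmr_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority vote over three redundant copies of a value."""
    return (a & b) | (a & c) | (b & c)


def secded_encode(nibble: int) -> int:
    """Encode 4 data bits as an 8-bit extended Hamming (SECDED) codeword."""
    bits = [0] * 8  # bits[0]: overall parity; bits[1..7]: Hamming positions
    bits[3], bits[5], bits[6], bits[7] = ((nibble >> i) & 1 for i in range(4))
    bits[1] = bits[3] ^ bits[5] ^ bits[7]  # covers positions 1, 3, 5, 7
    bits[2] = bits[3] ^ bits[6] ^ bits[7]  # covers positions 2, 3, 6, 7
    bits[4] = bits[5] ^ bits[6] ^ bits[7]  # covers positions 4, 5, 6, 7
    bits[0] = sum(bits[1:]) & 1            # makes overall parity even
    return sum(bit << i for i, bit in enumerate(bits))


def secded_decode(word: int):
    """Return (nibble, status); nibble is None for an uncorrectable double error."""
    bits = [(word >> i) & 1 for i in range(8)]
    syndrome = 0
    for pos in range(1, 8):
        if bits[pos]:
            syndrome ^= pos  # XOR of set positions is 0 for a valid codeword
    overall = sum(bits) & 1
    if overall:
        # Odd number of flips: treat as a single error and fix it.
        # A syndrome of 0 here means the overall parity bit itself flipped.
        bits[syndrome] ^= 1
        status = "corrected"
    elif syndrome:
        # Overall parity holds but the syndrome is nonzero: two bits flipped.
        return None, "double-error"
    else:
        status = "ok"
    nibble = bits[3] | (bits[5] << 1) | (bits[6] << 2) | (bits[7] << 3)
    return nibble, status
```

For example, `tmr_vote(0b1010, 0b1010, 0b0110)` returns `0b1010` because the two matching copies outvote the corrupted one. Note the trade-off across the list: parity and CRC only detect a change, SECDED corrects one flipped bit at the cost of four check bits per nibble in this layout, and TMR triples the storage or logic.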

There are also several standard techniques to provide security for embedded systems at the hardware level, including:

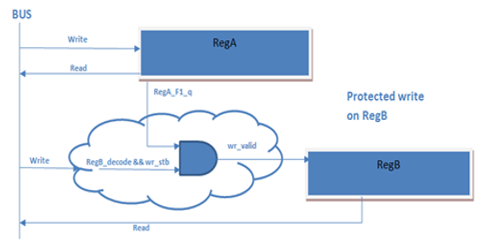

- Protecting software access to a critical register by using a field in a “lock” register

- Encrypting and decrypting sensitive data using the Advanced Encryption Standard (AES)

- Ensuring that only authorized programs can access a specific region of memory

- Authenticating a message and checking its data integrity with a Hash-Based Message Authentication Code (HMAC)

- Modifying a hardware function to obfuscate its functionality

Figure 2: Representation of a Lock Register
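Two items from the security list, the lock register and HMAC, are easy to model in software. The Python sketch below is illustrative only: the `LockableRegister` class and its sticky-lock behavior are my assumptions about one common way a lock field can work, not a description of any particular chip, and the helper names `make_tag` and `verify_tag` are hypothetical. The HMAC part uses Python's standard `hmac` and `hashlib` modules with SHA-256.

```python
import hashlib
import hmac


class LockableRegister:
    """Software model of a register guarded by a sticky lock bit (hypothetical)."""

    def __init__(self, reset_value: int = 0) -> None:
        self.value = reset_value
        self.locked = False

    def lock(self) -> None:
        # Assumption: once set, the lock stays set until the next reset.
        self.locked = True

    def write(self, new_value: int) -> bool:
        """Attempt a write; return False if the lock blocked it."""
        if self.locked:
            return False  # the write is silently ignored
        self.value = new_value
        return True


def make_tag(key: bytes, message: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the message with a shared secret key."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify_tag(key: bytes, message: bytes, tag: bytes) -> bool:
    """Check authenticity and integrity using a constant-time comparison."""
    return hmac.compare_digest(make_tag(key, message), tag)
```

A typical use of the lock model would be for boot code to configure the register and then call `lock()` so that later software cannot change it. For HMAC, a receiver that shares the key recomputes the tag, and any change to the message or the tag makes `verify_tag` return `False`.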

Of course, there are a lot of implementation details behind these two lists. To find out much more about these techniques, I invite you to sign up for our webinar here. Both functional safety and security are critical topics for embedded systems in many applications, so learning how to design hardware properly is important. I think you’ll find the information interesting and valuable.